Games help children develop creativity, imagination, and cognitive skills. Games are also important in the world of science, where many discoveries were inspired by them (including the whole discipline of Game Theory). Games have also featured prominently in the world of art through literature, music, paintings, and films. I find that one of the most evocative depictions of a game is in Ingmar Bergman’s film “The Seventh Seal” (1957), where a Swedish knight, Antonius Block, returns to his native country from the Crusades and challenges Death to a game of chess. The stakes are high. The prize is life itself.

Within the field of artificial intelligence, games also have a significant role. Two games in particular are of critical importance: chess and Go.

Chess is a strategy game played by two opponents on a checkered board of 64 squares, which alternate in color between black and white. Each player has 16 pieces (king, queen, 2 rooks, 2 bishops, 2 knights, and 8 pawns), which are positioned on the board when the game begins. Players then take turns moving the pieces according to fixed rules. The objective of the game is to reach a position where the opponent’s most important piece, the king, is under attack and cannot escape (checkmate). The game-tree complexity of chess is estimated to be around 10^120.

Go is also a strategy board game played by two opponents. Unlike the chess board, the Go board is a 19×19 grid of lines creating 361 intersections. Each player has either white or black pieces called stones (181 stones for black, and 180 stones for white). At the beginning of the game the board is empty, and the players take turns placing a stone on one of the intersections of the grid. The objective of the game is to control more territory than the opponent. The game-tree complexity of Go is estimated to be around 10^360.

Both games require advanced strategic thinking, both have been regarded as too complex to be cracked by brute force, and both have been considered to represent the pinnacle of human intelligence. With the advancement of technology and artificial intelligence (AI), matches have been arranged for both games in which one of the best human players faced an intelligent machine capable of playing the game. The stakes in these matches, although not as high as in the game portrayed in The Seventh Seal, were still substantial: the human player was regarded as a defender of humanity, a protector of human intellect against the advancement of machines.

Deep Blue: the computer that was taught to play chess

Deep Blue (including its predecessor Deep Thought and its successor Deeper Blue) is a single-purpose computer built to play chess (and only chess). Its main creators were Feng-hsiung Hsu and Murray Campbell.

The creation of Deep Blue is an interesting saga in itself that lasted over 12 years, starting at Carnegie Mellon University’s department of Computer Science in 1985 and ending at IBM in 1997. Its claim to fame came from beating chess Grandmaster and, at the time, World Champion Garry Kasparov. Deep Blue and Kasparov played two matches: one in Philadelphia in 1996, which Kasparov won 4:2, and a rematch in New York in 1997, which Deep Blue won 3½:2½. The matches were not devoid of drama, with mutual accusations of unfair competition, cheating, and even espionage.

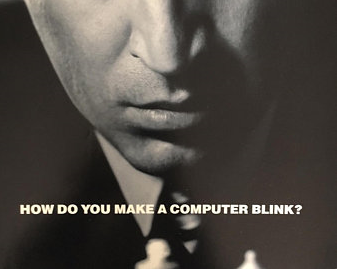

Official poster for the 1997 rematch between Deep Blue and Garry Kasparov

Deep Blue was built using general-purpose supercomputer processors combined with chess accelerator chips, complex search algorithms and evaluation functions, an opening book, and an endgame database. This was a brute-force approach that relied on rules developed together with strong human players to try to account for every position that could occur during a game. Search speed was critical, since chess matches are played under strict time limits. Deep Blue was capable of analyzing 50 billion positions in the 3 minutes available for making a single move. Adjectives such as “intelligent”, “creative”, “god-like”, “subtle”, and “insightful” were applied to some of the moves made by Deep Blue.
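To make the idea of "search plus evaluation function" more concrete, here is a minimal sketch of a depth-limited game-tree search with alpha-beta pruning. It is not Deep Blue's actual algorithm, which was far more elaborate and partly implemented in hardware. To keep it short, it assumes a toy game instead of chess: players alternately remove 1, 2, or 3 stones from a pile, and whoever takes the last stone wins. The names `legal_moves`, `evaluate`, `negamax`, and `best_move` are illustrative only.

```python
from typing import List, Tuple


def legal_moves(stones: int) -> List[int]:
    """Toy game (an assumption, not chess): remove 1, 2, or 3 stones."""
    return [m for m in (1, 2, 3) if m <= stones]


def evaluate(stones: int) -> int:
    """Score a position from the point of view of the player to move."""
    if stones == 0:
        return -1  # the opponent just took the last stone: the mover has lost
    return 0  # search horizon reached: this toy heuristic has no opinion


def negamax(stones: int, depth: int, alpha: int = -2, beta: int = 2) -> int:
    """Depth-limited search with alpha-beta pruning (negamax formulation)."""
    if stones == 0 or depth == 0:
        return evaluate(stones)
    best = -2  # lower than any score evaluate() can return
    for move in legal_moves(stones):
        # The opponent's best score, negated, is our score for this move.
        score = -negamax(stones - move, depth - 1, -beta, -alpha)
        best = max(best, score)
        alpha = max(alpha, score)
        if alpha >= beta:
            break  # the opponent would never allow this line: prune it
    return best


def best_move(stones: int, depth: int) -> Tuple[int, int]:
    """Return (move, score) for the side to move, searching `depth` plies."""
    best_score, choice = -2, legal_moves(stones)[0]
    for move in legal_moves(stones):
        score = -negamax(stones - move, depth - 1)
        if score > best_score:
            best_score, choice = score, move
    return choice, best_score
```

Calling `best_move(5, 10)` searches deep enough to see the end of the game and finds the winning move (take one stone, leaving four). With a shallow search such as `best_move(5, 1)` the horizon is reached before the game ends, so the result depends entirely on the evaluation function, which is why a chess engine of this kind needs both fast search and carefully tuned evaluation rules.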

The IBM team (which at a later stage was joined by American Grandmaster Joel Benjamin) went even further in preparing for the rematch with Kasparov and programmed Deep Blue to play some psychological games as well. As explained by Feng-hsiung Hsu: “Of course we also built in some tricks for Garry. For certain moves there was a delay or some moves it played immediately… This has psychological impact, as the machine becomes unpredictable, which was our main goal”. In fairness to both sides, playing against a machine also poses unique challenges for the human player. In the words of Kasparov: “A machine won’t get overconfident when it has a superior position or dejected when it is worse. A computer won’t tire during a tense six-hour battle, get nervous as its clock ticks down, or distracted, or need restroom breaks. Worse, knowing your opponent is immune makes it even harder to properly navigate your own nervous system when facing a machine”. And even further: “In humans, that is called sangfroid. And if you don’t have any sang, you can be very froid…”.

Deep Blue was without any doubt an extraordinary accomplishment. It is perhaps the best example of a “specific” intelligence, that is, a system that can act intelligently, but only at one task: in this case, playing chess (some might speculate that Deep Blue’s scope was even narrower: to play chess against Kasparov). This should not be confused with the search for a “universal” or “generic” artificial intelligence.

AlphaGo: the computer that taught itself to play Go (and then chess and shogi)

AlphaGo was created by a team of researchers led by David Silver at DeepMind, an AI lab co-founded by Demis Hassabis and later acquired by Google. In 2016 AlphaGo played a Go match against South Korean Lee Sedol, considered one of the strongest players in the world, and won 4:1. This event marked the first time a computer defeated a top-ranked (9-dan) professional in a Go match. To illustrate the complexity of Go and how remarkable this achievement was, the number of legal positions in the game is estimated to be larger than the number of atoms in the universe.

As eloquently expressed by Demis Hassabis, “of course winning was the main aim, but in fact the most important thing was the way AlphaGo won. During the match AlphaGo played many highly original, creative and beautiful moves, most famously move 37 in game 2, which in many cases overturned centuries of received wisdom. It wasn’t just regurgitating or copying human knowledge”. Lee Sedol said of the same move: “I thought AlphaGo was based on probability calculation and it was merely a machine. But when I saw this move I changed my mind. Surely AlphaGo is creative”. Lee Sedol announced his retirement from professional Go playing in 2019: “with the debut of AI in Go games, I’ve realized that I’m not at the top even if I become the No. 1 through frantic efforts. Even if I become the No. 1, there is an entity that cannot be defeated”.

AlphaGo, unlike Deep Blue, used more advanced AI technology in the form of deep neural networks and reinforcement learning. Neural networks, which roughly mimic the way the human brain processes information, process data through a model of interconnected (artificial) neurons arranged in multiple layers. Reinforcement learning, at its most essential, means learning through trial and error, in this case by playing many games and learning from mistakes. At first, the system plays completely randomly, but over time it learns from wins, losses, and draws to adjust the parameters of the neural network, making it more likely to choose advantageous moves in the future.
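The trial-and-error idea can be illustrated with a very small self-play sketch. This is not AlphaGo's method: it swaps the deep neural network for a plain lookup table and Go for a toy game (players alternately remove 1, 2, or 3 stones, and whoever takes the last stone wins; this game has no draws). All names and parameter values (`train`, `greedy`, `lr`, `epsilon`, and so on) are assumptions made for the example.

```python
import random
from collections import defaultdict

ACTIONS = (1, 2, 3)  # toy game (an assumption): remove 1, 2, or 3 stones


def legal(stones):
    return [a for a in ACTIONS if a <= stones]


def greedy(q, stones, rng=None):
    """Pick the move with the highest learned value (ties: random or first)."""
    moves = legal(stones)
    best = max(q[(stones, a)] for a in moves)
    candidates = [a for a in moves if q[(stones, a)] == best]
    return rng.choice(candidates) if rng else candidates[0]


def train(episodes=5000, max_stones=10, lr=0.5, epsilon=0.2, seed=0):
    """Learn move values by self-play; one shared table plays both sides."""
    rng = random.Random(seed)
    q = defaultdict(float)  # (stones, move) -> estimated value for the mover
    for _ in range(episodes):
        stones = rng.randint(1, max_stones)
        while stones > 0:
            # All values start at 0, so early play is effectively random.
            if rng.random() < epsilon:
                action = rng.choice(legal(stones))  # explore
            else:
                action = greedy(q, stones, rng)  # exploit what was learned
            remaining = stones - action
            if remaining == 0:
                target = 1.0  # took the last stone: a win
            else:
                # What is good for the opponent is bad for the mover.
                target = -max(q[(remaining, a)] for a in legal(remaining))
            # Nudge the estimate toward the observed outcome.
            q[(stones, action)] += lr * (target - q[(stones, action)])
            stones = remaining
    return q
```

After `q = train()`, calling `greedy(q, 6)` returns the move the table currently rates highest with six stones left. A system like AlphaGo follows the same loop in spirit (play, observe the result, adjust), but the table is replaced by network parameters and the update by gradient-based training.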

At this stage, despite their different methodologies, AlphaGo had in common with Deep Blue that they were both specific machines that could play only one game. The leap towards a more general machine took place with AlphaGo’s successors, most notably AlphaZero. AlphaZero, which can play Go, chess, and the Japanese game shogi, was trained by playing only against itself, without an endgame database, without an opening book, and with no domain-specific knowledge other than the rules of the game. The amount of training the network needs depends on the style and complexity of the game, taking approximately 9 hours for chess, 12 hours for shogi, and 13 days for Go.

Man vs. machine?

One immediate conclusion from these tales is the massive progress AI has achieved in the last three decades. Progress does not necessarily follow a linear trajectory, but it appears clear that AI is a powerful technology and we will likely be seeing many of its applications in the coming years.

There is another, perhaps subtler, point that could be extracted from these examples. Both the match between Garry Kasparov and Deep Blue and the match between Lee Sedol and AlphaGo have been regularly portrayed as a case of man versus machine. I cannot agree with this portrayal. In the end, it is not man versus machine but man versus man. As remarkable a feat as it is for Kasparov to reach his level of mastery at chess and for Lee Sedol to achieve his command of Go, it is at least as impressive for humankind to have built the artificial intelligence that defeated those minds.

Feng-hsiung Hsu expressed it clearly: “the match was never really ‘man versus machine’, but rather ‘man as a performer versus man as a toolmaker’. Whatever the outcome of the match, when we cheered for the winner, we were cheering for a unique human achievement.”

Furthermore, AI can provide an unprecedented opportunity for us humans to improve our knowledge. Both Kasparov (by proposing a combined style of chess in which teams composed of a human and a machine play against each other) and Lee Sedol (by studying and learning from the moves made by AlphaGo and thus gaining deeper insights into the game) recognized this. And this concept does not end with chess and Go; it is applicable to any human endeavor. We might just be at the doors of unprecedented knowledge advancement. Within this framework the machines are not a replacement for humans but a tool to help us reach higher.

The victories of Deep Blue and AlphaGo should count as a score for humanity.

Explore more about this topic:

* Behind Deep Blue: Building the Computer That Defeated the World Chess Champion (by Feng-Hsiung Hsu). This book can be a bit technical at times, but it is a first-person account of the whole process of building Deep Blue, written by one of its creators.

* Deep Thinking: Where Machine Intelligence Ends and Human Creativity Begins (by Garry Kasparov with Mig Greengard). Insightful book written by Garry Kasparov on his experience playing chess with Deep Blue and his thoughts on the future of artificial intelligence.

* Game Changer: AlphaZero's Groundbreaking Chess Strategies and the Promise of AI (by Matthew Sadler and Natasha Regan). The book provides a great account of the creation of AlphaGo and AlphaZero. It also contains an analysis of many of AlphaZero’s chess games.